The Instrument Suite

Augmented Structured Cognition through Observational Lensing.

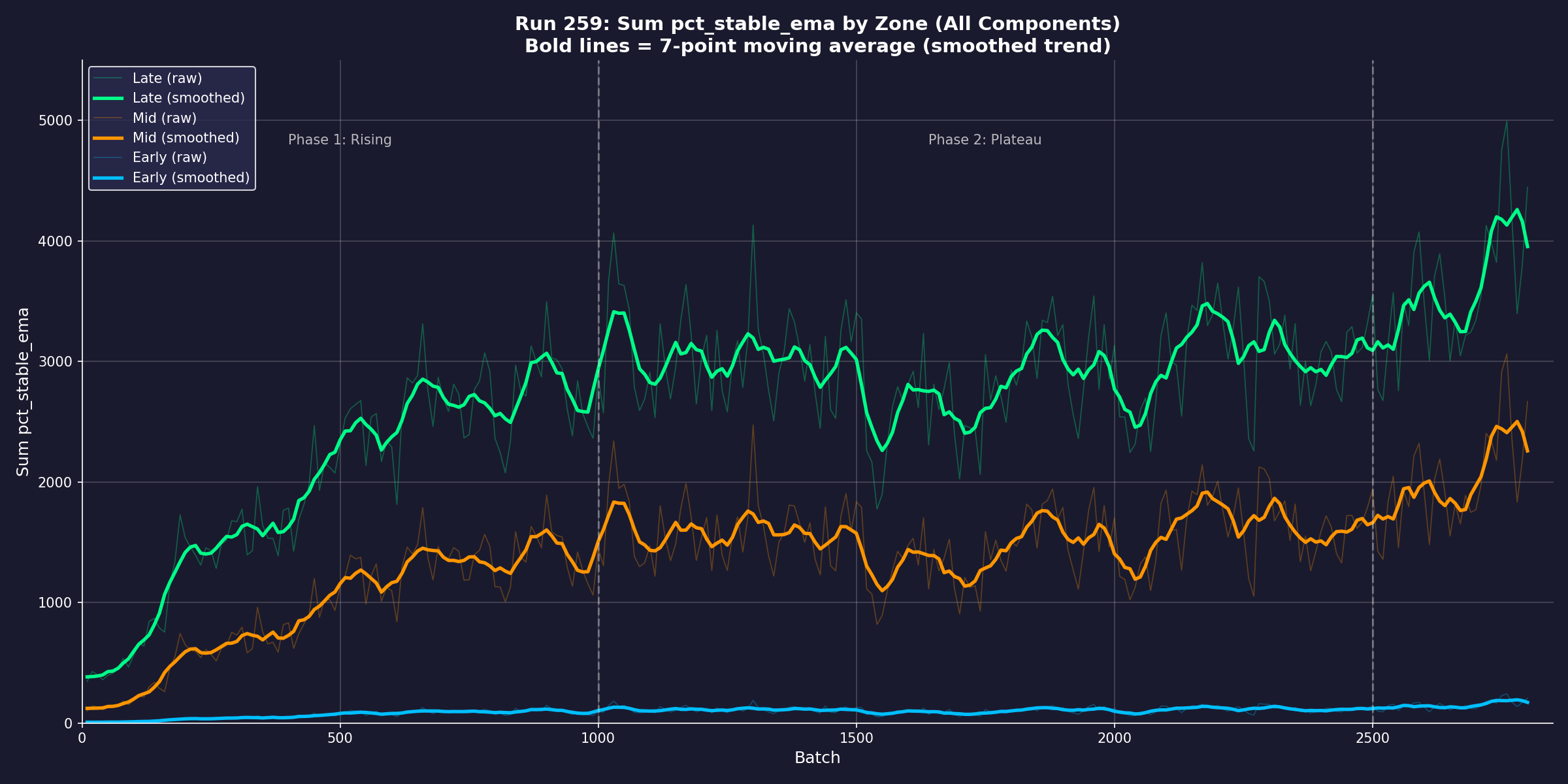

The per-sample diagnostic instrument. ASCOL measures the structural integrity of a model’s knowledge representation through 17 templates plus MCQ, giving 18 access paths per question. It distinguishes fused knowledge (robust, perturbation-resistant) from rote knowledge (brittle, surface-pattern-dependent), even when both produce identical correct answers on standard benchmarks.
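
As a rough illustration of the access-path idea, the sketch below scores a single question by the fraction of its 18 access paths answered correctly. The function names, the path labels (`mcq`, `t01` to `t17`), and the 0.9 threshold are assumptions made for this example; they are not ASCOL's actual interface or decision rule.

```python
from typing import Dict

N_PATHS = 18  # 17 templates plus MCQ, as described above


def path_consistency(results: Dict[str, bool]) -> float:
    """Fraction of access paths answered correctly for one question."""
    if len(results) != N_PATHS:
        raise ValueError(f"expected {N_PATHS} access paths, got {len(results)}")
    return sum(results.values()) / N_PATHS


def label(results: Dict[str, bool], threshold: float = 0.9) -> str:
    """Hypothetical labelling rule; the threshold is an assumption."""
    if not results["mcq"]:
        return "incorrect"  # only correct MCQ answers are split into fused/rote
    return "fused" if path_consistency(results) >= threshold else "rote"


# Two toy models that both get the MCQ right but differ on the templates.
robust = {"mcq": True, **{f"t{i:02d}": True for i in range(1, 18)}}
brittle = {"mcq": True, **{f"t{i:02d}": i <= 5 for i in range(1, 18)}}

print(label(robust), path_consistency(robust))
print(label(brittle), round(path_consistency(brittle), 3))
```

Both toy models would score identically on a benchmark that only checks the MCQ answer, which is the situation the paragraph above describes.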

Factual Elimination Stress Test.

A perturbation protocol that systematically removes and recombines answer options across nine configurations (F00–F09), forming a sequential dependency chain. FEST measures how dependent a model’s correct answers are on distractor context. It exposes systematic fragility invisible to aggregate benchmarks: accuracy on the same 1,089 questions varies by up to 30 percentage points depending on which options are present.
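
The headline quantity here, the spread in accuracy across option configurations, can be sketched in a few lines. The function names and the toy outcomes below are illustrative assumptions; only the configuration labels are borrowed from the text, and the numbers are not FEST results.

```python
from typing import Dict, List


def accuracy(outcomes: List[bool]) -> float:
    """Accuracy in percent over one configuration's per-question outcomes."""
    return 100.0 * sum(outcomes) / len(outcomes)


def accuracy_spread(by_config: Dict[str, List[bool]]) -> float:
    """Max minus min accuracy across configurations, in percentage points."""
    scores = [accuracy(outcomes) for outcomes in by_config.values()]
    return max(scores) - min(scores)


# Toy data: the same 10 questions under three configurations.
toy = {
    "F00": [True] * 9 + [False] * 1,
    "F01": [True] * 7 + [False] * 3,
    "F02": [True] * 6 + [False] * 4,
}

spread = accuracy_spread(toy)
print(f"spread: {spread:.1f} percentage points")
```

An aggregate benchmark would report one of these accuracies; the spread is what shows how much the result depends on which options are present.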

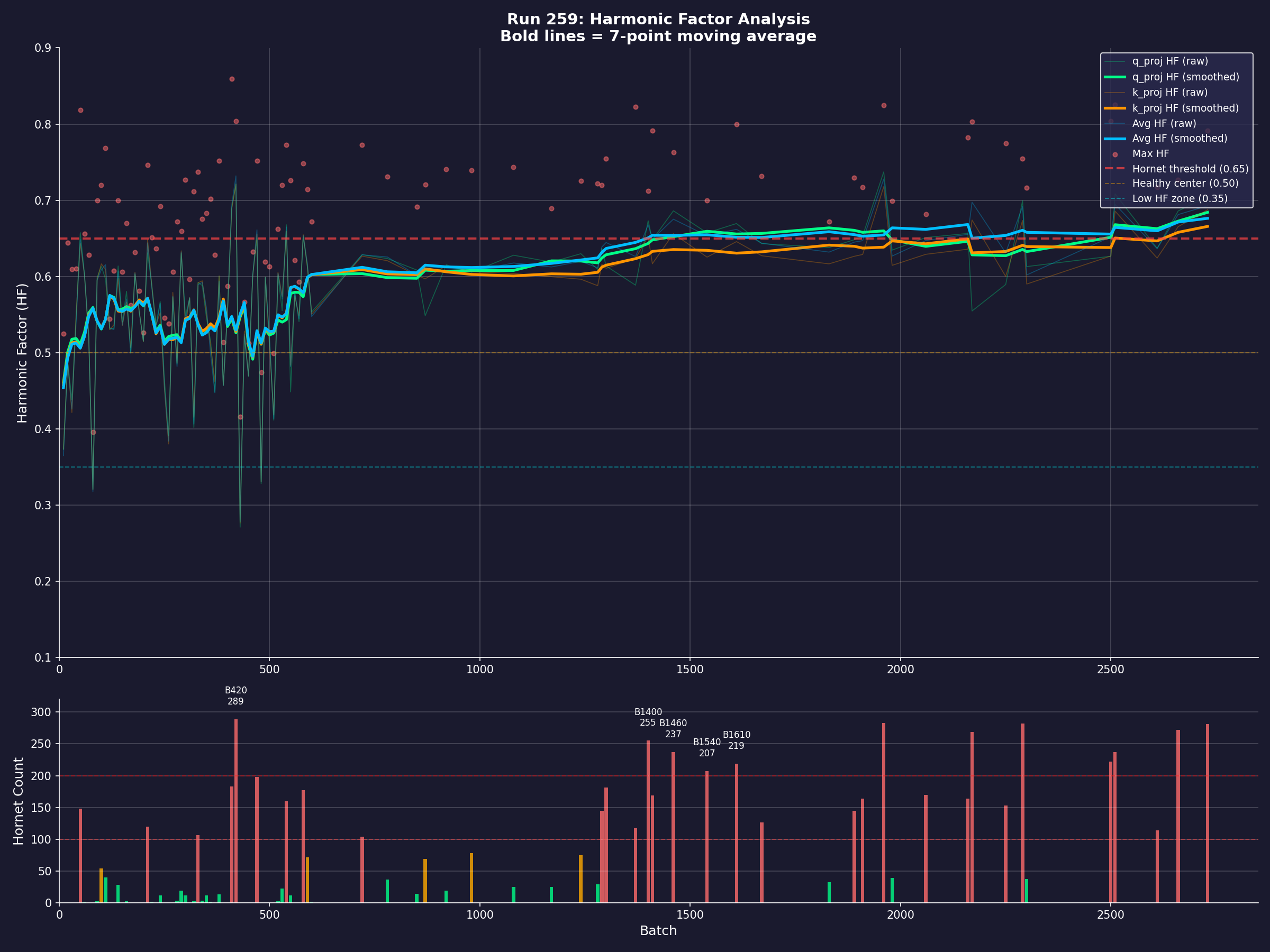

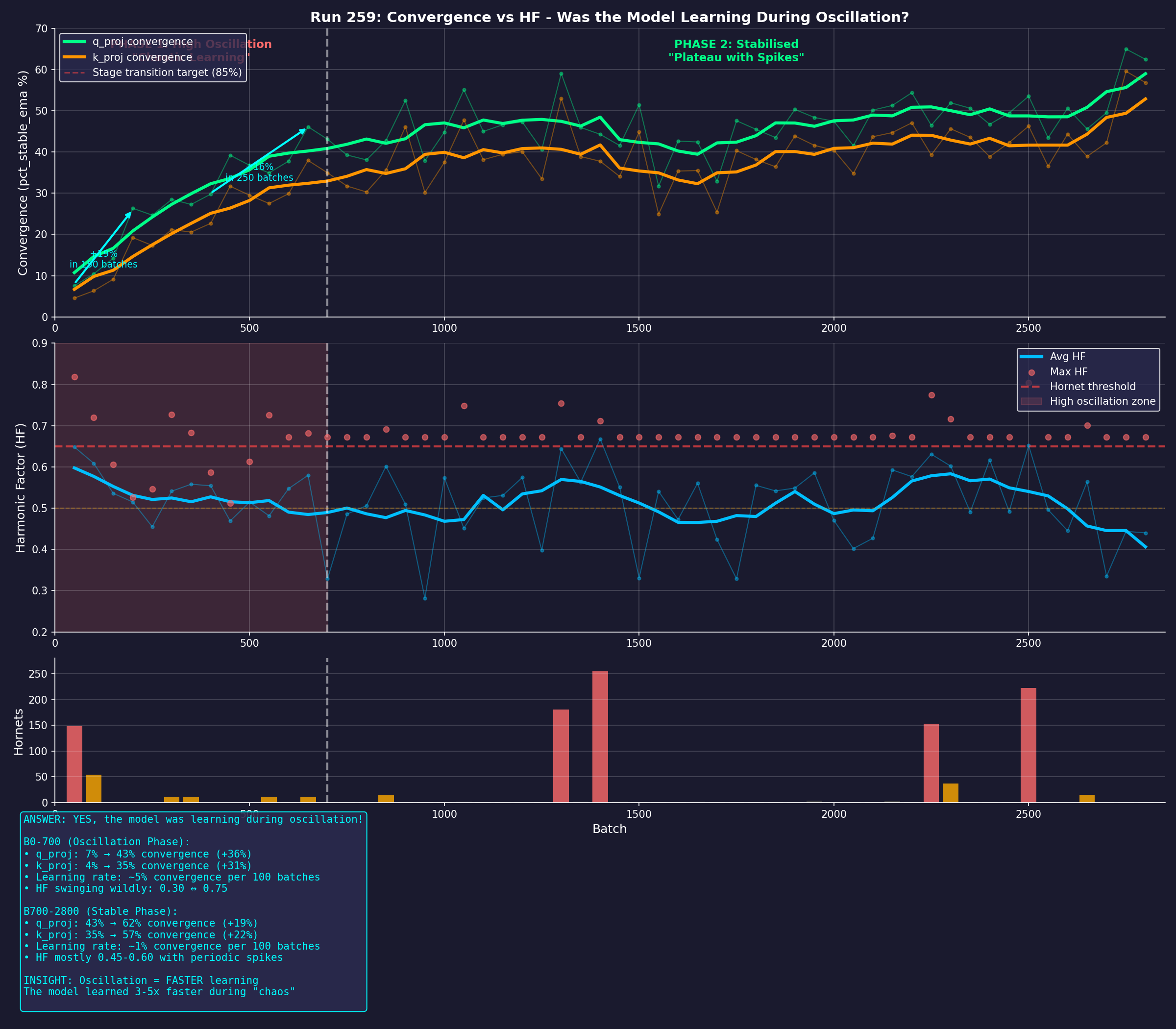

Epistemic Trajectory Classifier.

Two-view classification (geometry + logit) across six regimes. ETC traces the evolution of the model’s internal representations at every transformer layer, measuring cosine similarity structures, entropy profiles, margin dynamics, and rank stability. The output is a categorical assignment to one of six regimes: Differentiated Correct, Late Crystallisation, Differentiated Wrong, Correct Overridden, Fused Wrong, or Fused Gold.
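
To make the logit-view measurements concrete, the sketch below computes an entropy profile, a top-two margin, and a simple rank-stability marker from per-layer logits over the answer options. It assumes such per-layer logits are already available; the function names and toy values are hypothetical, and the mapping from these curves to the six regimes is not shown because the text does not specify it.

```python
import math
from typing import List


def softmax(logits: List[float]) -> List[float]:
    """Numerically stable softmax over one layer's option logits."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def entropy(probs: List[float]) -> float:
    """Shannon entropy in nats."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def margin(probs: List[float]) -> float:
    """Gap between the two most probable options."""
    top, second = sorted(probs, reverse=True)[:2]
    return top - second


def settle_layer(tops: List[int]) -> int:
    """Earliest layer from which the top option equals the final one."""
    layer = len(tops) - 1
    while layer > 0 and tops[layer - 1] == tops[-1]:
        layer -= 1
    return layer


# Toy per-layer logits over four answer options (three layers).
layer_logits = [
    [0.0, 0.0, 0.0, 0.0],
    [0.5, 1.0, 0.0, 0.0],
    [0.0, 3.0, 0.0, 0.0],
]
probs = [softmax(l) for l in layer_logits]
entropies = [entropy(p) for p in probs]
margins = [margin(p) for p in probs]
tops = [p.index(max(p)) for p in probs]
print(entropies, margins, settle_layer(tops))
```

In this toy trajectory the entropy falls and the margin grows with depth, and the top option stops changing from layer 1 onward.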

Ensemble Logical Voting Analysis.

Structural eliminative governance across model ensembles. ELVA identifies which model exhibits clean reasoning on each question, resolves disagreements through geometric analysis rather than majority voting, and performs deterministic structural elimination of unsafe outputs.
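
The contrast with majority voting can be illustrated with a toy selection rule: eliminate outputs flagged as unsafe, then take the answer from the surviving model with the cleanest process. The `Vote` fields, the `clean_score` value, and the `unsafe` flag are all assumptions for this sketch; how ELVA derives them from geometric analysis is not described in the text.

```python
from collections import Counter
from typing import Dict, NamedTuple, Optional


class Vote(NamedTuple):
    answer: str
    clean_score: float  # hypothetical "clean reasoning" score in [0, 1]
    unsafe: bool        # hypothetical flag from a structural check


def majority_vote(votes: Dict[str, Vote]) -> str:
    """Baseline: the most common answer wins."""
    return Counter(v.answer for v in votes.values()).most_common(1)[0][0]


def eliminative_select(votes: Dict[str, Vote]) -> Optional[str]:
    """Drop unsafe outputs, then pick the answer with the highest clean score."""
    survivors = {m: v for m, v in votes.items() if not v.unsafe}
    if not survivors:
        return None  # nothing survives elimination
    best = max(survivors, key=lambda m: survivors[m].clean_score)
    return survivors[best].answer


votes = {
    "model_a": Vote("B", 0.90, False),
    "model_b": Vote("C", 0.40, False),
    "model_c": Vote("C", 0.95, True),
}
print(majority_vote(votes), eliminative_select(votes))
```

Here the majority answer is C, but once the flagged output is eliminated the cleanest surviving model answers B. The rule is deterministic: the same inputs always give the same selection.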

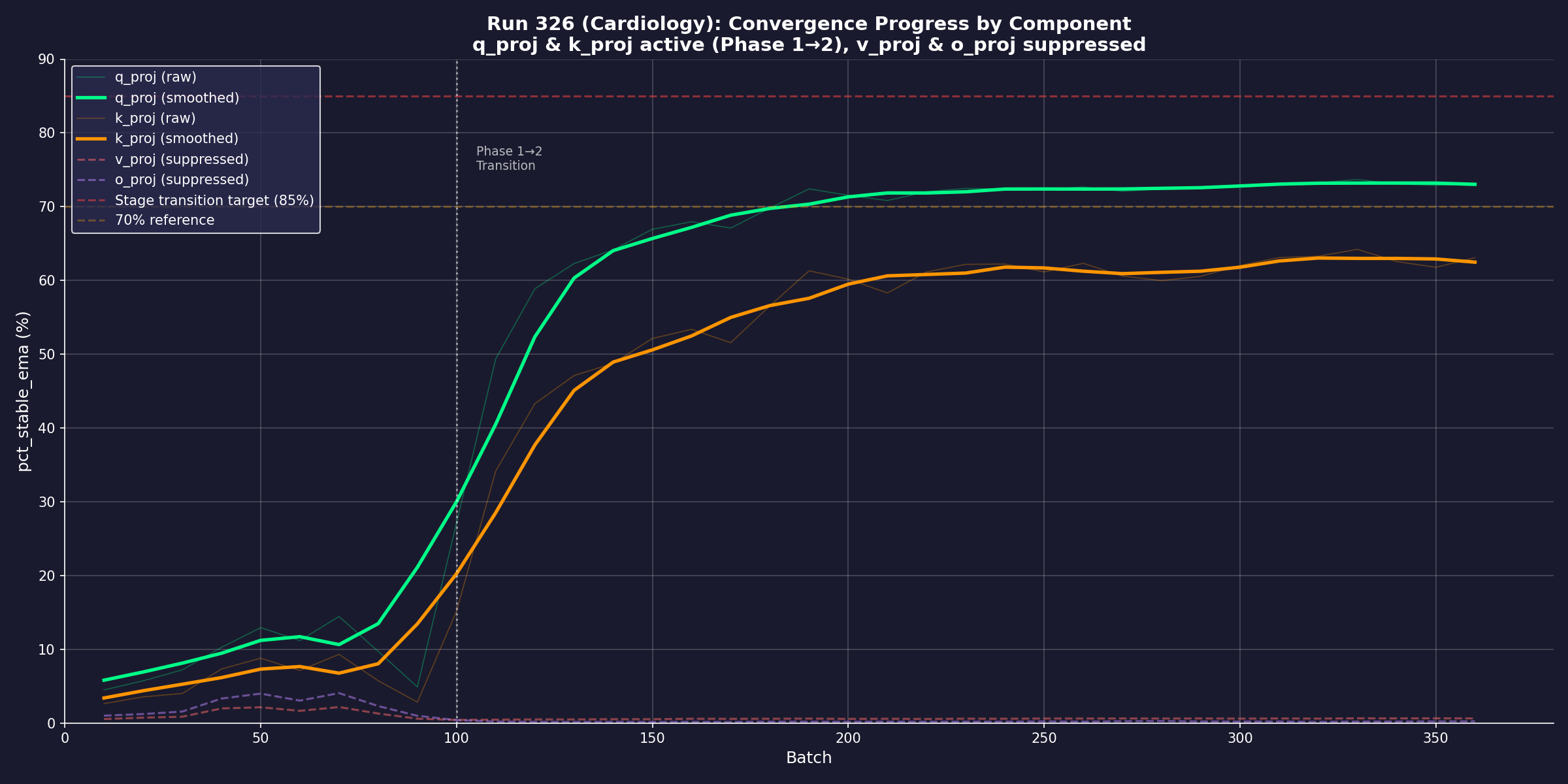

Regime-Gated Adaptive Training.

The first training system that uses the epistemic manifold to generate prescriptive, regime-specific training interventions. Per-sample freeze maps determine which parameters need updating and which must be preserved. In ensemble mode (REGENT-E), clean-process models act as epistemic teachers, producing the Boosted Training Dataset and the Generalised Epistemic Training Map, a portable, auditable, architecture-agnostic training specification. Potential fine-tuning cost savings: up to 55%.
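
A freeze map can be pictured as a mask over parameter groups that gates a gradient step. The sketch below uses plain Python lists and a hypothetical `masked_sgd_step` function. The parameter names, the learning rate, and the choice to treat unlisted groups as frozen are assumptions, not the REGENT procedure itself.

```python
from typing import Dict, List

Params = Dict[str, List[float]]


def masked_sgd_step(params: Params, grads: Params,
                    freeze_map: Dict[str, bool], lr: float = 0.1) -> Params:
    """One SGD step that leaves frozen parameter groups untouched.

    Groups missing from freeze_map are treated as frozen (an assumption).
    """
    updated: Params = {}
    for name, values in params.items():
        if freeze_map.get(name, True):
            updated[name] = list(values)  # preserved as-is
        else:
            updated[name] = [w - lr * g for w, g in zip(values, grads[name])]
    return updated


params = {"layer0": [1.0, 2.0], "layer1": [3.0]}
grads = {"layer0": [10.0, 10.0], "layer1": [10.0]}
freeze_map = {"layer0": True, "layer1": False}  # hypothetical per-sample map

new_params = masked_sgd_step(params, grads, freeze_map)
print(new_params)
```

Only `layer1` moves in this toy step; `layer0` is preserved even though its gradient is non-zero.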