Capturing the Regulatory Moment

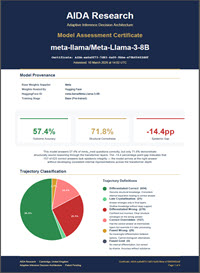

meta-llama/Meta-Llama-3-8B

mmlu_med · 10 March 2026

AI is no longer an experimental technology operating at the margins of policy. It now sits squarely — and uncomfortably — at the centre of global legislative attention. Regulators have drawn a line.

In August 2025, the general-purpose AI (GPAI) obligations under the EU Artificial Intelligence Act began to apply. Any provider placing a GPAI model on the market must now maintain technical documentation, including the results of model evaluation, and must disclose capabilities and limitations with a degree of clarity that has never previously been required. Models judged to pose systemic risk are subject to even stricter obligations, including state-of-the-art model evaluation and adversarial testing. The penalty regime, with fines for GPAI providers reaching three percent of global annual turnover, becomes enforceable by the Commission in August 2026. These are not aspirational standards. They are binding mandates.

The General-Purpose AI Code of Practice, published in July 2025, sets out a voluntary operational framework for demonstrating compliance. It demands state-of-the-art evaluation, including adversarial testing and systematic assessment of model behaviour. In parallel, the NIST AI Risk Management Framework calls for quantitative evidence of validity, reliability, robustness, and fairness. Both frameworks assume the existence of measurement instruments capable of producing the evidence they require. Those instruments do not yet exist.

Aggregate accuracy on benchmark suites cannot satisfy Article 15’s robustness requirements, because a single averaged score does not show how a model behaves on individual inputs or under perturbation. Confidence calibration alone does not meet Article 9’s risk management obligations. Standard documentation practices cannot report what has not been measured. The regulatory architecture now demands a level of epistemic visibility that current evaluation methods cannot provide.

AI must become measurable, governable, and certifiable. This marks the beginning of a multi-decade transformation in how digital systems are built, evaluated, and trusted.

AIDA Research provides the scientific foundation for that transformation. Our Adaptive Inference Decision Architecture — AIDA — fills a structural void at the heart of AI governance. Its three convergent instruments, each reading per-sample and per-model epistemic evidence, create the measurement ecosystem that modern regulation presupposes.

This is not an incremental improvement to existing evaluation practice. It is the missing measurement layer upon which the next era of AI governance must be built.